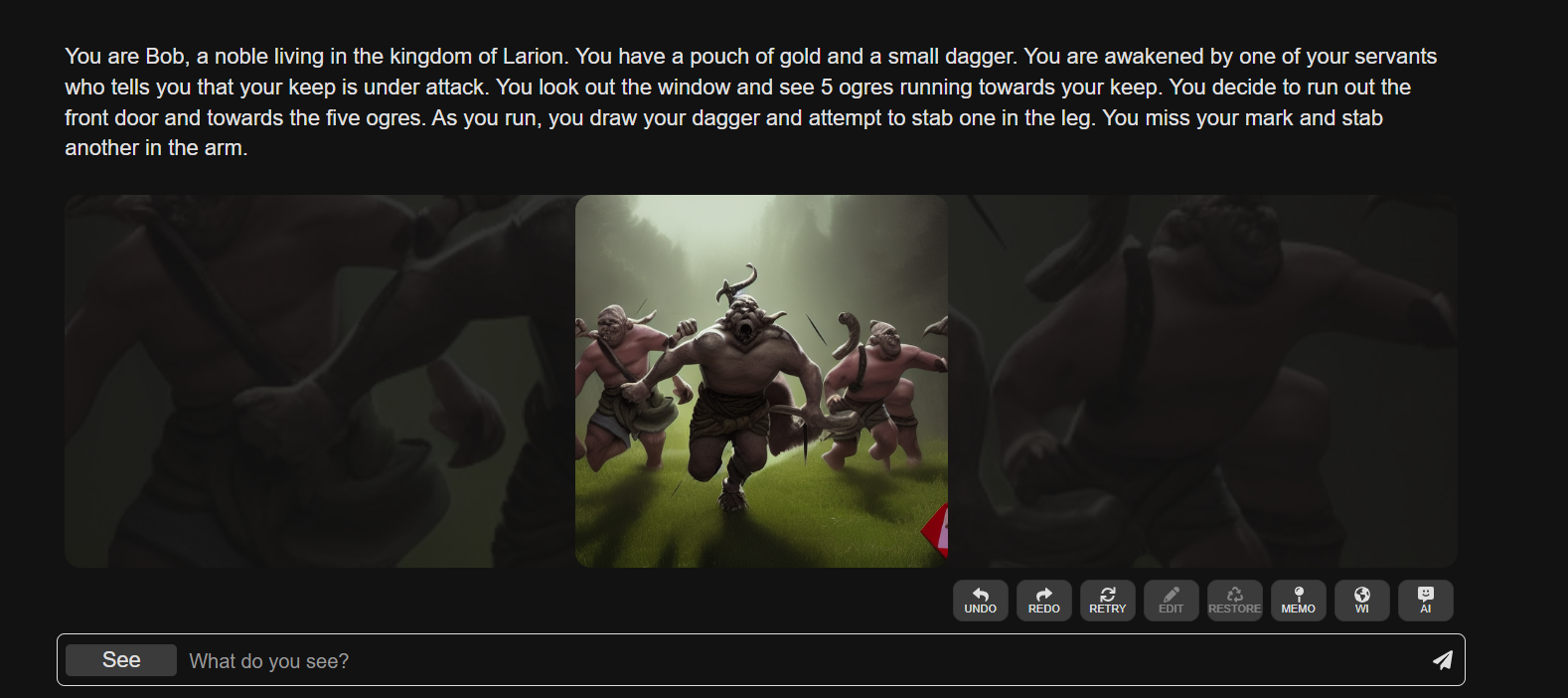

AI Dungeon realized the dream many avid gamers have had since the ’80s: an evolving storyline that players themselves create and direct. Now, it’s going further with a brand-new feature that lets players generate images that illustrate those stories.

Developed by indie game studio Latitude, which was originally a one-person operation, AI Dungeon writes dialogue and scene descriptions using one of several text-generating AI models, allowing players to respond to events however they choose (within reason). It remains a work in progress, but with the emergence of image-generating systems like Stability AI’s Stable Diffusion, Latitude is investing in new ways to liven up players’ narratives.

Throughout the AI Dungeon UI, you can choose a “See” option at any time to prompt Stable Diffusion to generate an illustration.

Access requires a subscription to one of Latitude’s premium plans, which start at $9.99 per month. It’s a credit-based system: generating an image costs two credits, with credit limits ranging from 480 per month for the cheapest plan to 1,650 for the priciest ($29.99 per month). On the AI Dungeon client available through Valve’s Steam marketplace, which is priced at $30, members get 500 credits with their purchase.
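To make the credit math concrete, here is a minimal sketch that converts the figures quoted above into images per plan and a rough cost per image. The plan labels and function names are illustrative assumptions, not part of any Latitude API, and the calculation assumes every credit is spent on images.

```python
# Rough credit arithmetic using only the figures quoted in the article.
# Plan labels and helper names are hypothetical, chosen for illustration.

CREDITS_PER_IMAGE = 2  # the article states one image costs two credits


def images_for(credits: int) -> int:
    """Number of whole images a credit allowance can pay for."""
    return credits // CREDITS_PER_IMAGE


def cost_per_image(price_usd: float, credits: int) -> float:
    """Approximate dollars per image if all credits go to images."""
    return round(price_usd / images_for(credits), 3)


# (price in USD, credits) as reported; the Steam price is a one-time purchase,
# while the two subscription tiers are billed monthly.
plans = {
    "cheapest subscription": (9.99, 480),
    "priciest subscription": (29.99, 1650),
    "Steam client": (30.00, 500),
}

if __name__ == "__main__":
    for name, (price, credits) in plans.items():
        print(f"{name}: {images_for(credits)} images, "
              f"about ${cost_per_image(price, credits)} per image")
```

Under these assumptions, the cheapest tier covers 240 images a month and the priciest 825, while the Steam purchase covers 250.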

“With Stable Diffusion, image generation is fast enough and cheap enough to offer custom image generation to everyone. Image generation is fun on its own, and being able to create custom images to go along with your AI Dungeon story was a no-brainer,” Latitude senior marketing director Josh Terranova told TechCrunch via email.

Unlike image-generating systems of comparable fidelity (e.g., OpenAI’s DALL-E 2), Stable Diffusion is unrestricted in what it can create, except for the versions served through an API, like Stability AI’s. Trained on 12 billion images from the web, it’s been used to generate artwork, architectural concepts and photorealistic portraits, but also pornography and celebrity deepfakes.

Latitude hopes to lean into this freedom, allowing users to create “NSFW” images, including nudes, as long as they don’t make them public. AI Dungeon’s built-in story-sharing mechanism is currently disabled for stories containing images, a step Terranova says is necessary while Latitude “figure[s] out the right experience and safeguards.”

Image credits: Latitude

That’s taking a big risk. Latitude landed in hot water several years ago when some users showed that the game could be used to generate text-based simulated child porn. The company implemented a moderation process involving a human moderator reading through stories alongside an automated filter, but the filter frequently flagged false positives, resulting in overzealous banning.

Latitude eventually corrected the moderation process’s flaws and implemented an acceptable content policy, but not until after some serious review bombing and negative publicity. Eager to avoid the same fate, Latitude is taking steps to “sensibly” curate AI-generated images while affording players creative expression, Terranova says.

“We’re working with Stability AI, the makers of Stable Diffusion, to ensure measures are in place to prevent generating certain types of content, primarily content depicting the sexual exploitation of children. These measures would apply to both published and unpublished stories,” Terranova said. “There are a number of unanswered questions regarding the use of AI images that all of us will be working through as AI image models become more accessible. As we learn more about how players will use this powerful technology, we expect changes could be made to our product and policies.”

In my limited experiments, the brand-new Stable Diffusion-powered feature works, but not consistently well, at least not yet. The images generated by the system do reflect AI Dungeon’s imagined scenarios (e.g., an image of a pirate in response to the prompt “You come across a captain”), but not in a consistent art style, and sometimes with details omitted.

Image credits: Latitude

For instance, Stable Diffusion was confused by one scene particularly rich in detail: “You hide in the bushes. You find a group of thugs, who are carrying a bundle of money. You leap out and stab one of the thugs, causing him to drop the bundle.” In response, AI Dungeon generated an image of a swordswoman in a forest against the backdrop of a city (so far so good), but without the “bundle of money” in sight.

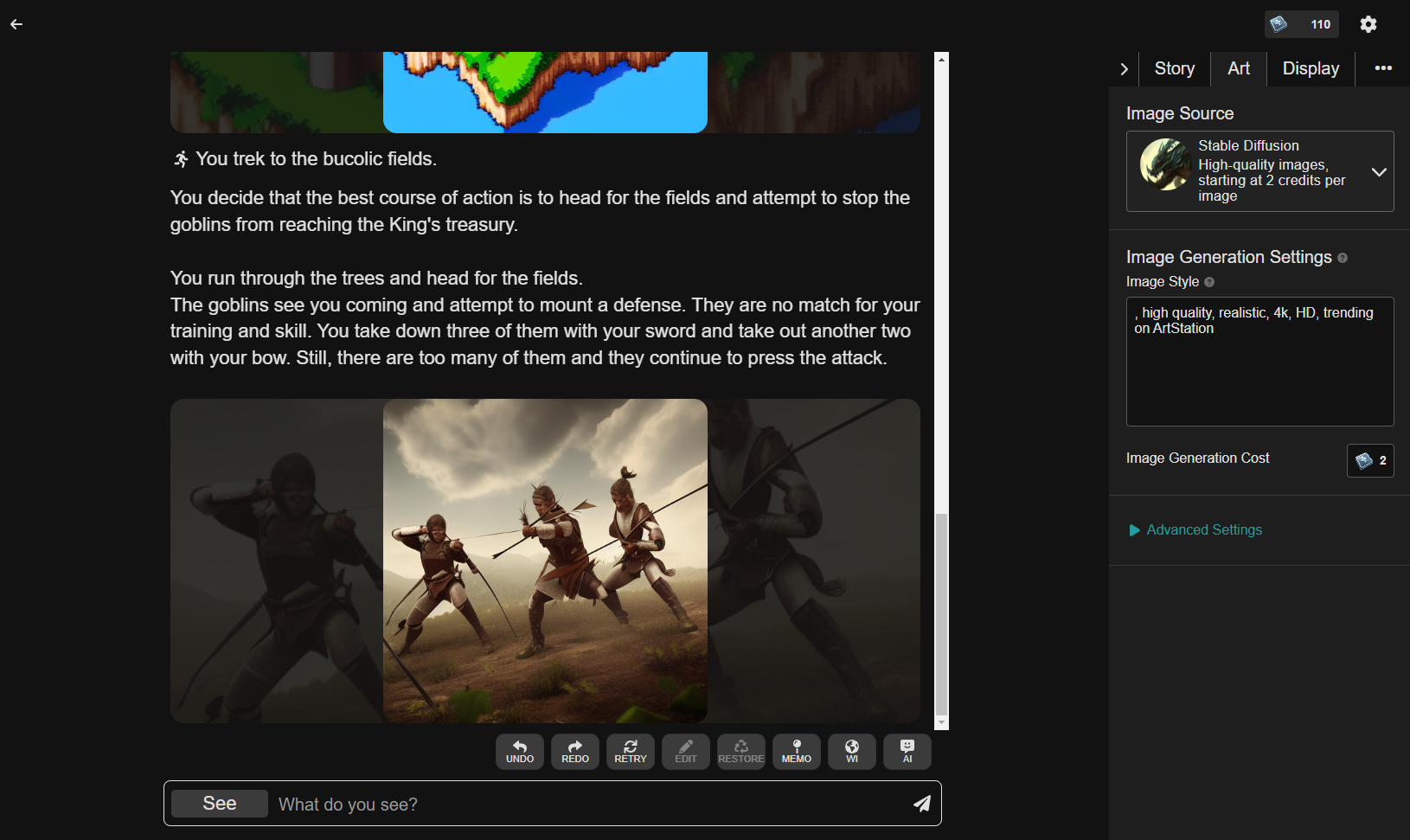

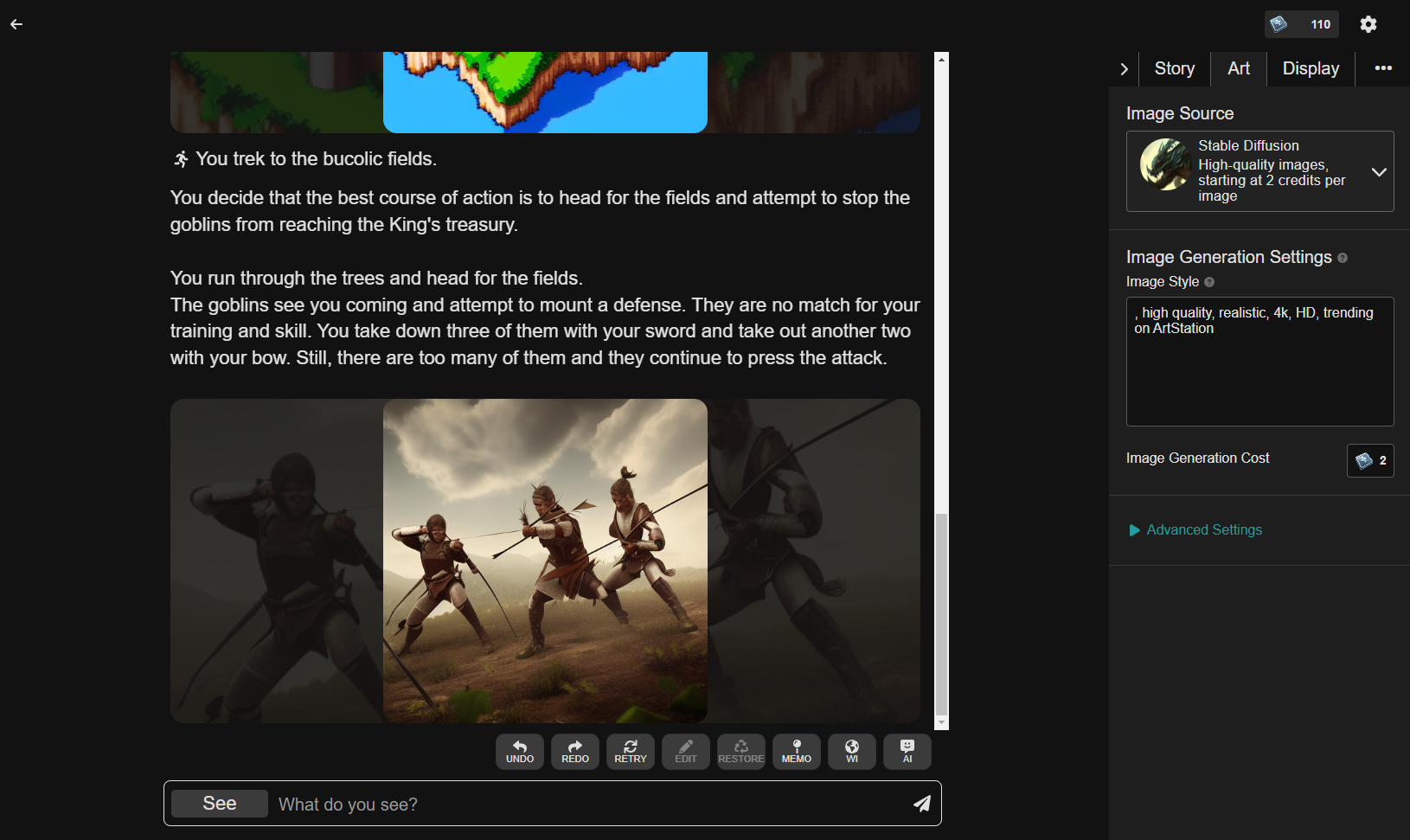

Another complex scene involving skirmishing goblins gave Stable Diffusion trouble. The system appeared to focus on specific keywords at the expense of context, producing an image of warriors with bows instead of goblins pierced by a sword and arrows.

AI Dungeon lets you tweak the prompt to fine-tune the results, but it didn’t make a big difference in my experience. Edits needed to be highly specific to have much of an effect (e.g., adding a line like “in the style of H. R. Giger”), and even then, the effect wasn’t apparent beyond the color palette. My hopes for a story illustrated entirely in pixel art were quickly dashed.

Still, even when the scene illustrations aren’t perfectly on-topic or realistic (think pirates with sausage-like fingers standing in the middle of an ocean), there’s something about them that gives AI Dungeon’s storylines greater weight. Perhaps it’s the emotional impact of seeing characters, your characters, brought to life in a way, engaged in battling or bantering or whatever else makes its way into a prompt. Science has found as much.

What about the (ahem) less SFW side of Stable Diffusion and AI Dungeon? Well, that’s tough to say, because it’s nonfunctional at the moment. When this reporter tested a decidedly NSFW prompt in AI Dungeon, the system returned an error message: “Sorry but this image request has been blocked by Stability.AI (the image model provider). We will allow 18+ NSFW image generation as soon as Stability allows us to control this ourselves.”

Image credits: AI Dungeon

“[The] API has always had the same NSFW classifier that the official open source release/codebase has in the default install,” Emad Mostaque, the CEO of Stability AI, told TechCrunch when contacted for clarification. “[It] will be upgraded soon to a better one.”

Terranova says that Latitude has plans to expand image generation with emerging AI systems, perhaps sidestepping these kinds of API-level restrictions.

With time, I think that’s an exciting future, assuming that the quality improves and objectionable content doesn’t become the norm on AI Dungeon. It previews a whole new class of game whose artwork is generated on the fly, tailored to adventures that players themselves dream up. Some game developers have already begun to experiment with this, using generative systems like Midjourney to spit out art for shooters and choose-your-own-adventure games.

But those are big ifs. If the past few months are any indication, content moderation will prove to be a challenge, as will fixing the technical issues that continue to trip up systems like Stable Diffusion.

Another open question is whether players will be willing to stomach the cost of fully illustrated storylines. The $10 subscription tier nets 250 illustrations or so, which isn’t much considering that some AI Dungeon stories can stretch on for pages and pages, and considering that crafty players could run the open source version of Stable Diffusion to generate artwork on their own machines.

In any case, Latitude is intent on charging full steam ahead. Time will tell whether that was wise.
